1. Data

The first dimension was data. The military had its firing tables, and people like Diebold envisioned data processing. All input and output data were private to the machine and the user doing the computation. This was scaling up, mostly by spending more money; it reminds me of the work on the moon landing.

- One machine

  - Big-iron supercomputers

  - Bigger memories, multi-level caches

  - Multi-processor concurrency, high-speed interconnect

  - Vast storage arrays

- Monolithic

  - Reliability through expensive, redundant hardware

2. Machines

The second dimension was machines. It was made possible by high-speed networking and falling prices. Instead of scaling up, this was scaling out: as wide as possible. This has led to infrastructure as a service and the end of monolithic vendors. The effort is still underway but mostly solved, and the future holds efficiency gains more than raw performance improvements. Interestingly, in this dimension the input data is often public and the output data is semi-public as well (probably the web's influence).

- Commodity hardware

- Three-tier architecture (frontend, appserver, database)

  - Use a bigger DB machine and cheaper stateless nodes

  - Consistent caching for high-throughput reads

- Vertical partitions

  - Split MySQL into parallel DBs; overcome the limits of a single machine

  - Reliability through master/slave replication

  - Hot shards and failures handled manually

- Distributed data

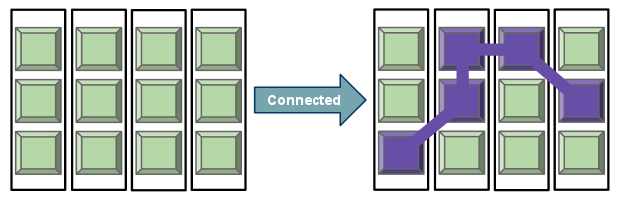
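To make "split into parallel DBs" concrete, here is a minimal sketch of key-based routing, one common way to spread records across several databases so that no single machine holds everything. It is an illustration under assumptions, not a description of any particular system: `ShardRouter`, the shard names, and the in-memory dictionaries standing in for databases are all hypothetical.

```python
import hashlib


class ShardRouter:
    """Maps a record key to one of N parallel databases (hypothetical names)."""

    def __init__(self, shard_names):
        if not shard_names:
            raise ValueError("at least one shard is required")
        self.shard_names = list(shard_names)

    def shard_for(self, key: str) -> str:
        # A stable hash means every stateless app server picks the same shard
        # for the same key, with no coordination between servers.
        digest = hashlib.sha256(key.encode("utf-8")).digest()
        index = int.from_bytes(digest[:8], "big") % len(self.shard_names)
        return self.shard_names[index]


# Assumption: four shards, with plain dicts standing in for real databases.
router = ShardRouter(["db0", "db1", "db2", "db3"])
stores = {name: {} for name in router.shard_names}


def put(key, value):
    """Write the record to whichever shard owns the key."""
    stores[router.shard_for(key)][key] = value


def get(key):
    """Read the record from the shard that owns the key, or None if absent."""
    return stores[router.shard_for(key)].get(key)
```

Note what the sketch leaves out: it does nothing about a shard that becomes hot or fails, which matches the list above, where those cases were handled manually. Changing the number of shards also remaps most keys, which is one reason resharding was painful.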

3. Views

The third dimension is views: unique perspectives on shared data, where attributes vary based on who or what is viewing. The need came in part from personalization and socialization on the web, but also from multi-tenancy, hosted services, and growing datasets. For this dimension the input data is both public and private, and the output data blends the two. Caching of shared, public views isn't nearly as effective as it was in the second dimension, because each viewer may see a different result. The scaling challenge is connecting disparate, multifaceted information.
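A small sketch may help show what "attributes vary based on who is viewing" means in practice. Everything here is hypothetical (the `Post` type, its fields, and `view_for`); the point is only that one shared record produces a different result per viewer, which is why a single cached copy stops being enough.

```python
from dataclasses import dataclass, field


@dataclass
class Post:
    """A shared record. All field names are illustrative assumptions."""
    post_id: str
    author: str
    text: str
    visible_to: set = field(default_factory=set)  # empty set means public
    liked_by: set = field(default_factory=set)


def view_for(post, viewer):
    """Return the viewer-specific view of a shared post, or None if hidden."""
    is_author = viewer == post.author
    if post.visible_to and viewer not in post.visible_to and not is_author:
        return None  # private data the viewer may not see
    return {
        "post_id": post.post_id,
        "text": post.text,
        "like_count": len(post.liked_by),         # same for every viewer
        "liked_by_you": viewer in post.liked_by,  # varies per viewer
        "can_edit": is_author,                    # varies per viewer
    }
```

For a post liked only by `"bob"`, `view_for(post, "bob")` and `view_for(post, "cat")` agree on `like_count` but differ on `liked_by_you`, so the two results cannot share one cache entry.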

- Personalization

  - Offline analysis of past history and behavior to derive new recommendations

  - Blend user's analyzed preferences with global data, common signals

  - Work done at query time, sometimes cacheable, often results are one-time-only

- Broadcast messaging

  - Fan-out of messages to per-user consumption feeds

  - Work done at write-time (Twitter), read-time (Facebook), or both

  - Message destinations are contacts, groups for access control

- Filtering and correlation

  - Search by keyword, geospatially across public and private data in real time

  - Cross-publisher integration with access control and immediacy

  - Relevance computed for new information at moment of collection/creation
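The write-time versus read-time distinction for broadcast messaging can be sketched in a few lines. This is a toy in-memory model with hypothetical names (`follow`, `post`, `feed_write_time`, `feed_read_time`), not how any real service is implemented; it only shows where the work lands in each approach.

```python
from collections import defaultdict

followers = defaultdict(set)  # author -> users who follow that author
following = defaultdict(set)  # user -> authors that user follows
outbox = defaultdict(list)    # author -> messages the author has posted
inbox = defaultdict(list)     # user -> precomputed per-user feed

_seq = 0  # global sequence number, standing in for a timestamp


def follow(user, author):
    followers[author].add(user)
    following[user].add(author)


def post(author, text):
    """Store the message once, then fan it out at write time."""
    global _seq
    _seq += 1
    message = (_seq, author, text)
    outbox[author].append(message)
    # Write-time fan-out: one append per follower, paid when posting.
    for user in followers[author]:
        inbox[user].append(message)
    return message


def feed_write_time(user):
    """Cheap read: the feed was already assembled when messages were posted."""
    return sorted(inbox[user], reverse=True)


def feed_read_time(user):
    """Read-time fan-out: gather from every followed author on each read."""
    messages = [m for author in following[user] for m in outbox[author]]
    return sorted(messages, reverse=True)
```

Write-time fan-out makes posting expensive for authors with many followers but keeps reads cheap; read-time fan-out does the opposite. In this toy model the two feeds match as long as follows happen before posts. They diverge when someone follows an author later, since only the read-time path picks up older messages.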

I'm not sure if the "third dimension of scaling" is the perfect phrase, but I think it's good for describing where we've been and where we're going.